- Source and target objects for transformed data (Informatica sources and targets).

- Mapping with transformations.

- Sessions and workflows for generated mappings.

- Auxiliary files for mapping – parametrical files and definition plans for external components.

- SQL code (BTEQ script, saved procedures) based on model input.

Generation results in Informatica are PowerCenter objects saved in XML which may be imported directly to the Informatica PowerCenter repository.

Tool architecture

Informatica code generator consists of three basic components:

- Application GTL Generator (Java) installed on the workstation.

- Mapping Architect for Visio (part of the installation IPC).

- Metadata repository in Oracle or PostgreSQL database.

Metadata for SW GTL Generator tool can be stored on any existing database server, there is no need to have dedicated database server.

GTL Application Generator runs locally on the PC, where must be installed client tools IPC and MS Visio. The application requires access to the repository via IPC client tools (command line tool pmrep.exe).

Main functions

- IN data- data management in the input layer. Allows reading, viewing and deleting data structures within the data model version.

- DS data – manage data structures in inner layer of the application. Performs within the version of the data model again.

- WRK data – manage working data structures. It allows preparing a full version of the data model or the difference between two data model versions for next objects generation.

- Source and Target – generate IPC sources and targets for the specific model version.

- Transformations – templates of IPC objects management, import parameter values and object generation.

- Text Templates – text templates management and texts/scripts generation.

IN data

Loading data to the interface layer contains two steps:

- Load of data structures of the specific model version.

- Load of history rules of the specific model version.

Data structure means the table definitions – list of columns, constraints, keys, indexes and so on. All imported definitions support generation of sources and destinations. Definitions are generally related to the data model version, it is possible to perform version comparison and update sources and destinations in case of model changes.

Data load is done from a text file in the format of csv with semicolon as a separator.Character set is optional.

Data entities containing history rules are used during load into core of the data warehouse – target. Rules import creates a metadata layer, which is used in mapping templates filling target.

Data load is done from a text file in the format of csv with semicolon as a separator. Character set is optional.

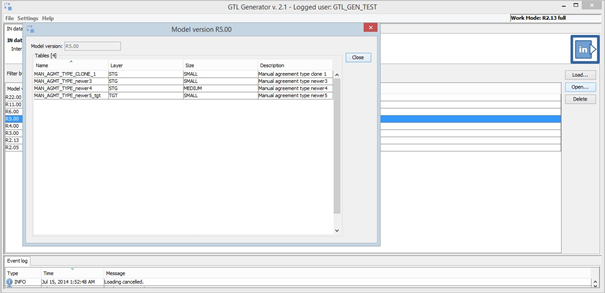

Screenshot with loaded tables in chosen model version.

DS data

Input layer contains data for each model version. For application full functionality internal data processing has to be made in DS data function.

Working (wrk) data

WRK – working data are processed internally by generator and supports:

- Database objects created in the user’s schema – schema with object definition rigths for user. Database objects are then the source for the template parameter values.

- Sources and targets generating.

Working data are in fact data structures and other metadata that depends on the selected operating mode. There are two types of working mode:

- FULL- working data contain all metadata for a specific model version.

- DIFF- working data contain only difference between two model versions. The advantage is that the generated sources, targets and parameter values possibly are performed only for new or modified objects.

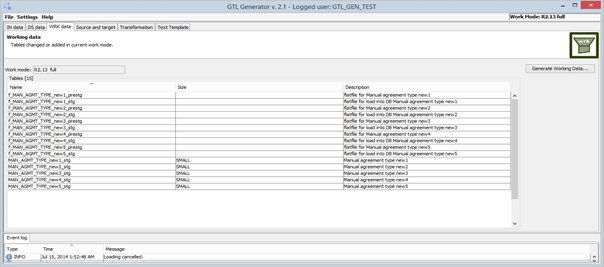

Screenshot in operating mode FULL with a list of new or changed tables.

Sources and targets

Sources and targets are directly generated IPC objects. Generator on the selected operating mode base:

- Generate them using to xml file on the path selected.

- Import them into the repository IPC.

Transformations

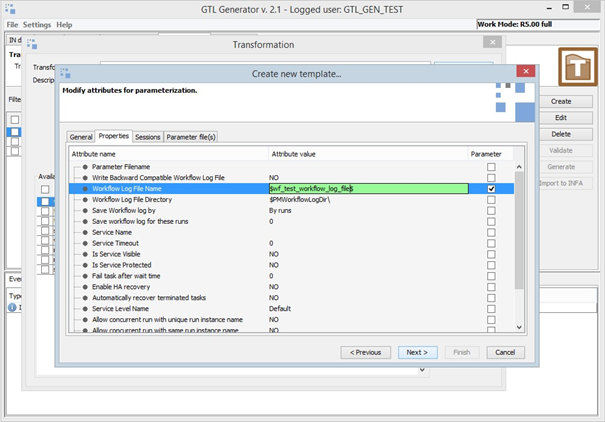

Transformation is a logically grouped set of templates and includes templates for workflow, mapping, session or parameter file. Template is created from object exported from IPC (mapping, session, workflow). Template type matches type of the exported object. Template definition it is possible to define as following sequence of steps:

- Export the object from IPC (xml files).

- Import object to the generator, in case of mapping to Mapping Architect for Visio.

- Setting the variable attributes – object parameters (in case of mapping in Mapping Architect for Visio).

- Completion of the template definition.

Screenshot with selection and definition of parameterized attributes in template.

Parameter values – import and export

A prerequisite for object generating is Import of values for the parameters in the template. There are two ways to import metadata:

- From text file in csv format.

- From database view in the user’s schema.

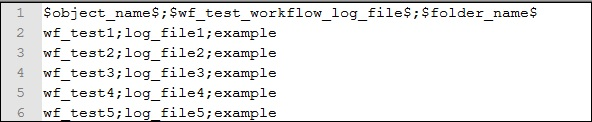

Example of the text file with parameter values.

Export is an operation that can be used in case of once imported file correction or can help to create a file for a different template, but with similar parameters.

IPC objects generation and import

Generating objects is the final operation and uses two options:

- Generate them using to xml file.

- Import them into the repository IPC.

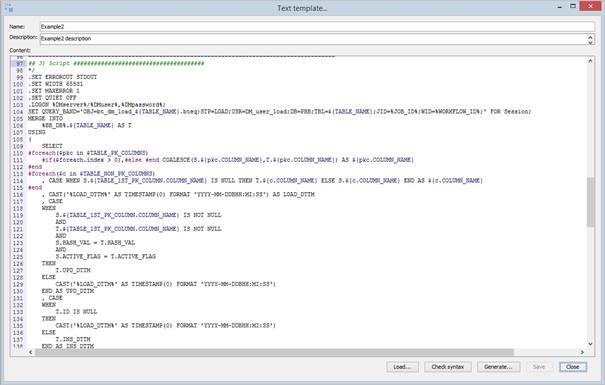

Text templates

Text templates use Apache Velocity technology integrated in the application. Templates can be used for example in SQL queries in ETL transformations. There are usually the same SQL queries /scripts with differences only in the names of tables, columns list, joining conditions etc. Such SQL queries can be parameterized and then generated.

Text templates parameters in text/script.